What is Thermography?

Thermography is the capture and subsequent analysis of heat images for the purpose of detecting temperature variations between or within objects.

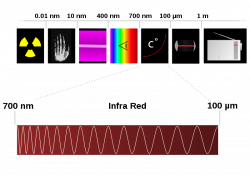

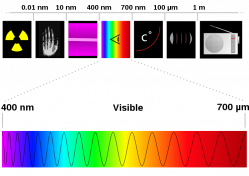

The word thermography derives from the Greek terms thermo, meaning heat, and graph, meaning drawing. All objects with temperatures above absolute zero emit thermal radiation, and at everyday temperatures most of it falls in the infrared. Infrared means, literally, below red, and it refers to electromagnetic radiation whose frequencies lie just below those of visible red light. Infrared energy is a part of the electromagnetic spectrum, which includes gamma rays, x-rays, ultraviolet, visible light, infrared, terahertz waves, microwaves, and radio waves. As a general rule, the warmer the object, the more infrared radiation it emits. A thermal-imaging camera is able to detect this radiated heat in much the same way that a regular camera detects visible light. It then renders these heat images in the visible spectrum so they can be viewed and analyzed.
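The rule that warmer objects emit more radiation can be made concrete with two standard physics relations: the Stefan-Boltzmann law (emitted power per unit area grows with the fourth power of absolute temperature) and Wien's displacement law (the wavelength of peak emission shortens as temperature rises). The sketch below is illustrative only and is not taken from the original article. The function names and the default emissivity of 1.0 (an ideal blackbody) are assumptions; real surfaces have emissivities below 1.

```python
# Illustrative sketch: how emitted thermal radiation scales with temperature.
# Function names and the default emissivity are assumptions for this example.

SIGMA = 5.670374419e-8   # Stefan-Boltzmann constant, W m^-2 K^-4
WIEN_B = 2.897771955e-3  # Wien displacement constant, m K


def radiant_exitance(temp_kelvin, emissivity=1.0):
    """Power radiated per unit area (W/m^2) by a surface at temp_kelvin."""
    if temp_kelvin <= 0:
        raise ValueError("temperature must be above absolute zero")
    return emissivity * SIGMA * temp_kelvin ** 4


def peak_wavelength_um(temp_kelvin):
    """Wavelength of peak blackbody emission, in micrometres."""
    if temp_kelvin <= 0:
        raise ValueError("temperature must be above absolute zero")
    return WIEN_B / temp_kelvin * 1e6


if __name__ == "__main__":
    for t in (300.0, 600.0):
        print(t, radiant_exitance(t), peak_wavelength_um(t))
```

Under these assumptions, an object near room temperature (300 K) peaks at a wavelength of roughly 10 micrometres, well inside the infrared, and doubling the absolute temperature multiplies the radiated power by sixteen.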

The History of Thermography

The infrared spectrum upon which thermography is based was discovered by Sir William Herschel in 1800. Sir William was the King's Astronomer to George III of England, and another of his notable achievements was the discovery of Uranus. He was aware that sunlight could be separated into its chromatic components (colors) by a glass prism, and he decided to investigate this phenomenon further by measuring the temperatures of the various refracted colors. It was his hope to discover a lens that would allow direct observations of the sun. This he was unable to do, but an unintended result of his experiment was the discovery of the thermal spectrum. He named his discovery dark heat, because it could not be seen with the naked eye.

In 1830, an Italian researcher named Macedonio Melloni followed up on Sir William’s work and discovered that rock salt crystals produced consistent refractions of the thermal spectrum. This discovery led the way toward standardization of infrared research. In 1840, Sir John Herschel, Sir William’s son, became the first person to capture a thermographic image on paper. Further improvements in infrared detection came slowly throughout the remainder of the nineteenth century. The next major advance in the science came in 1880, when Samuel Langley invented the bolometer, which allowed researchers to measure electromagnetic radiation. Then, in 1892, Sir James Dewar introduced the use of liquefied gases to increase the sensitivity of infrared detectors.

By the time of World War I, scientists on both sides of the conflict had begun to discover the military uses for infrared detection and thermography. As a result, most of the work in the field became classified and remained so through the end of the war. Even though no papers were published during the war years, there were anecdotal reports from both sides of thermography and infrared detection being used to locate enemy soldiers, vehicles, and aircraft. After the war, research into peacetime uses for the technology began again, and it was during the period between the world wars that the next major development was made. In 1935, a German company, Allgemeine Elektrizitäts-Gesellschaft (General Electricity Company), invented night-vision technology. This development had obvious military applications, so the technology was classified by both the Axis and Allied powers, and all subsequent refinements and improvements remained classified until 1955.

Beginning in the early 1960s, thermographic technology began to find uses in the civilian sector. Early industrial models were large, inaccurate, and expensive, and their use was limited to only the biggest companies. By the 1990s, however, the technology had advanced sufficiently that the new generation of thermal-imaging devices was smaller, extremely reliable, and much more affordable. Now, many small and medium-sized firms employ the technology, as do a large percentage of fire departments.

Thermography's Uses and Applications

Modern thermographic cameras, sensors, and infrared thermal imaging are used in a large number of applications. These include astronomy, military applications, night vision, security applications, non-destructive testing, research, predictive industrial maintenance, medical testing and imaging, moisture detection, mammography, firefighting, construction, utilities maintenance, leak detection, veterinary medicine, automotive maintenance, pollution detection, archaeology, condition monitoring, chemical imaging, mining, building inspection, geology, and heating and air-conditioning applications.

Types of Thermography

The two main types of thermography are active thermography and passive thermography. In active thermography, an external energy source is trained upon an object to produce a thermal contrast between it and its ambient surroundings. Passive thermography, on the other hand, uses no external energy source and detects the naturally occurring differences in temperature between an object and its background. The Flir T-200 is an uncooled, passive detector.

Advantages of Thermography

There are many advantages to using thermal imaging. In the industrial setting, it can be used to identify a component that is beginning to fail but has not yet failed. This predictive function allows companies to replace failing machinery and parts before they interrupt the manufacturing process. Another advantage is that the results are visual rather than chemical or mathematical, and thus they are easier to interpret. Thermal imaging is also a non-destructive technique, which means that it is not necessary to disassemble a component in order to evaluate it. Finally, the technique is useful for observing components that are too dangerous to approach.
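The predictive use described above can be illustrated with a small sketch: given a grid of surface temperatures read from a thermal image, flag the cells that run noticeably hotter than their surroundings. This is a simplified illustration and not a method described in the original article. The function name, the use of the median as the baseline, and the 10-degree threshold are all assumptions chosen for the example; real inspections rely on equipment-specific limits and a trained thermographer's judgment.

```python
# Illustrative sketch: flag cells in a temperature grid that are much hotter
# than the typical (median) temperature. Names and threshold are hypothetical.
from statistics import median


def find_hot_spots(temps_c, delta_c=10.0):
    """Return (row, col, temp) for cells more than delta_c above the median."""
    flat = [t for row in temps_c for t in row]
    if not flat:
        return []
    baseline = median(flat)
    return [
        (r, c, t)
        for r, row in enumerate(temps_c)
        for c, t in enumerate(row)
        if t - baseline > delta_c
    ]


if __name__ == "__main__":
    # Hypothetical readings in degrees Celsius; the centre cell runs hot.
    grid = [
        [30.0, 31.0, 30.0],
        [30.0, 55.0, 31.0],
        [29.0, 30.0, 30.0],
    ]
    print(find_hot_spots(grid))
```

The median is used as the baseline here because a single very hot cell shifts it far less than it would shift the mean.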

Additional Applications of Thermography

Slideshow credited to Finditfixit.com

Last updated 4 March, 2010. Kennesaw State University MAPW. Information for academic purposes only.